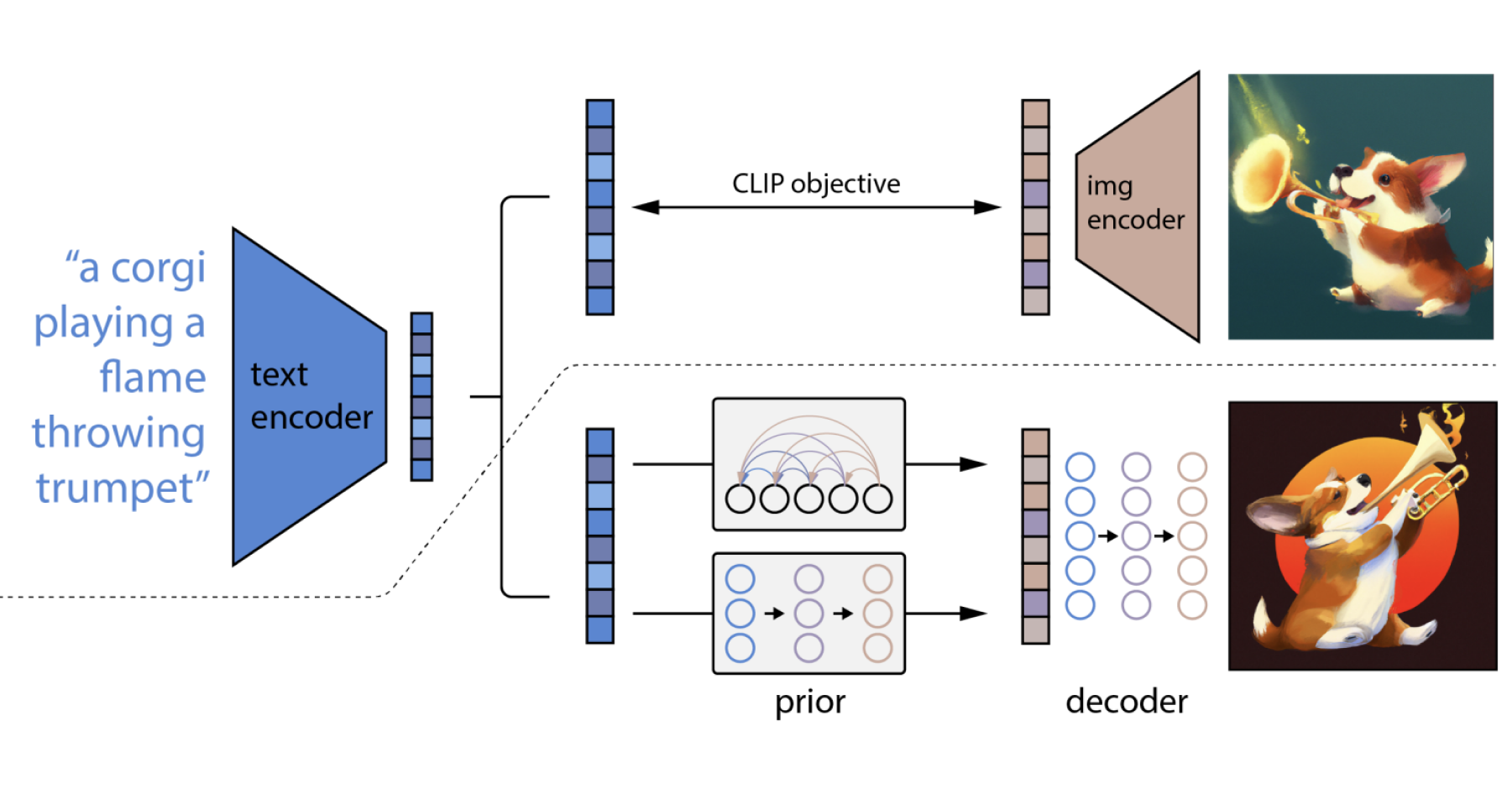

From DALL·E to Stable Diffusion: How Do Text-to-Image Generation Models Work? - Edge AI and Vision Alliance

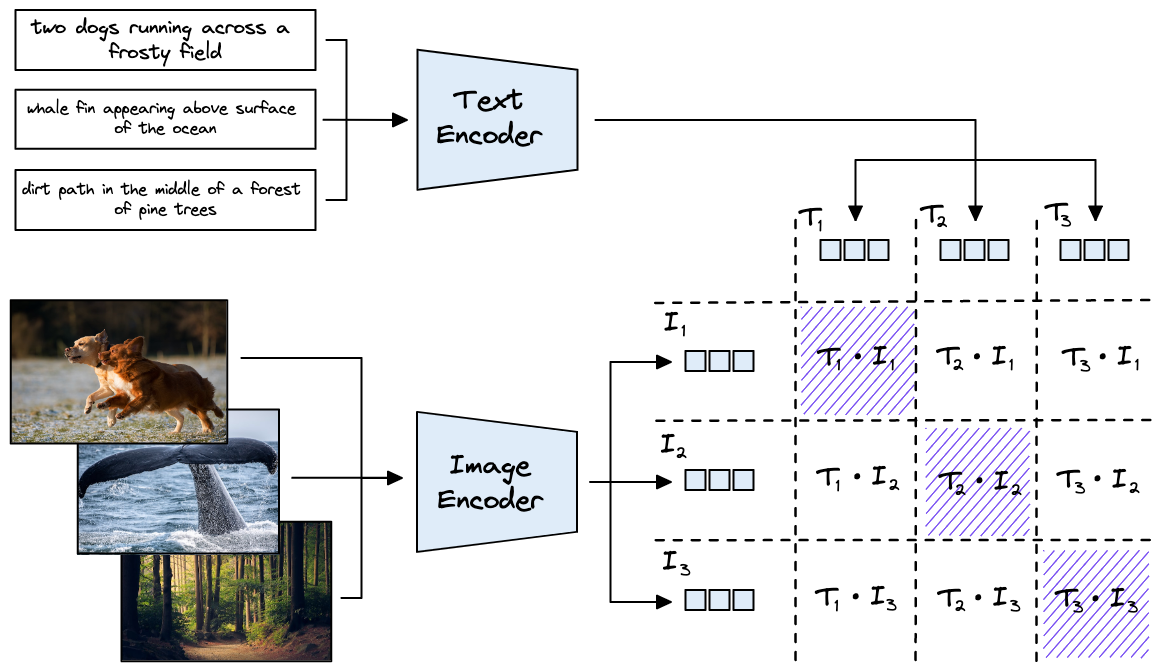

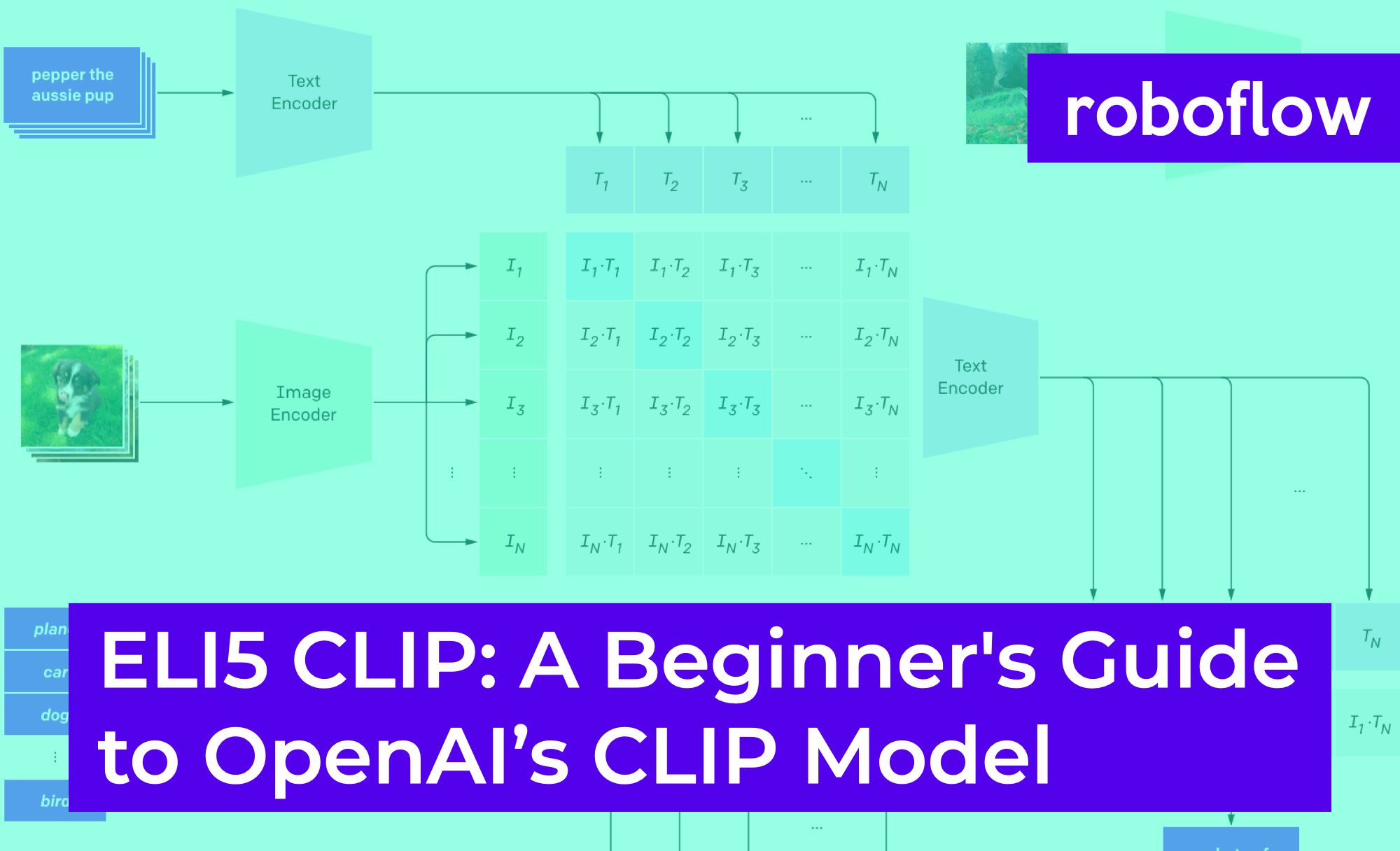

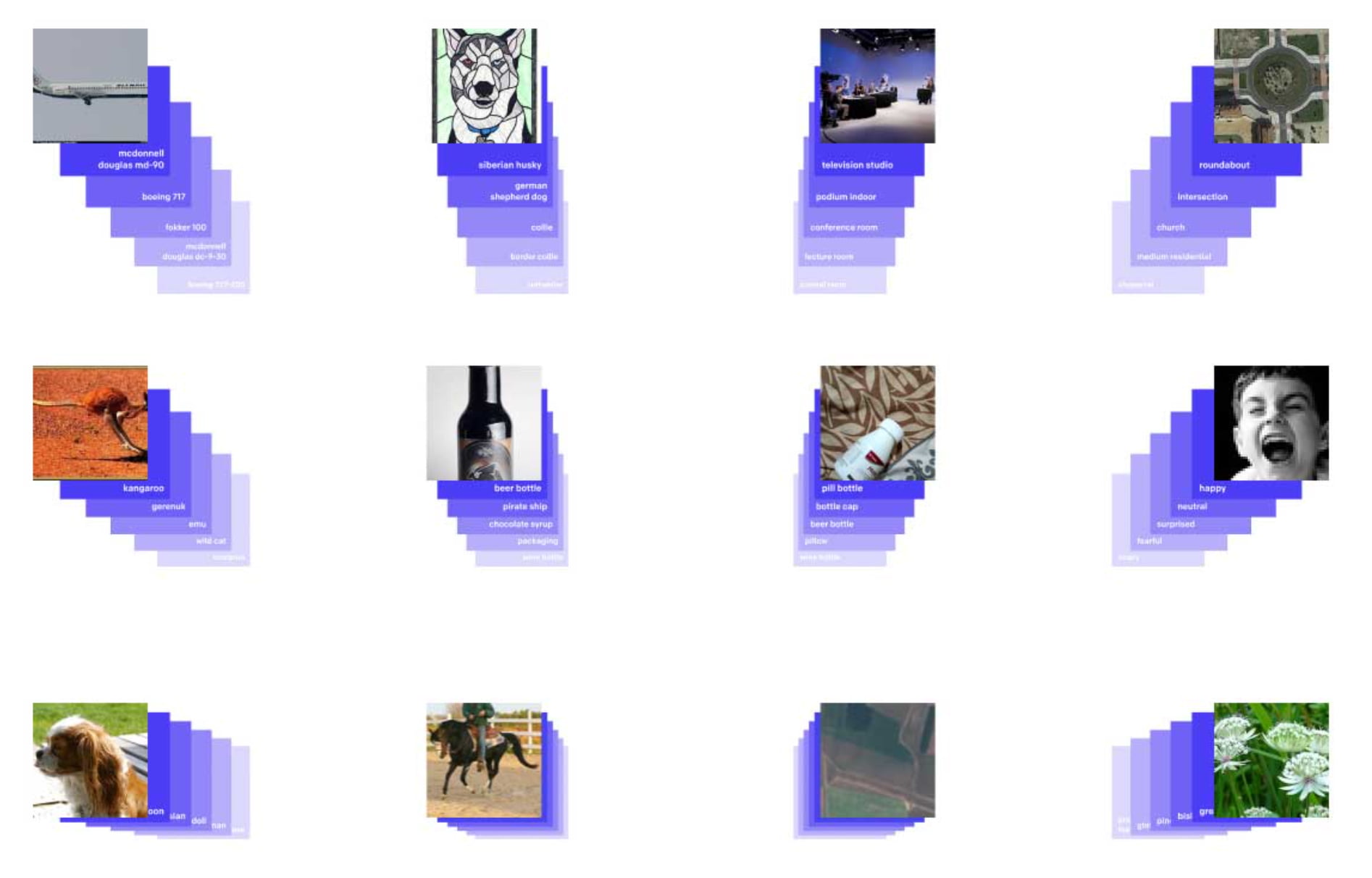

GitHub - openai/CLIP: CLIP (Contrastive Language-Image Pretraining), Predict the most relevant text snippet given an image

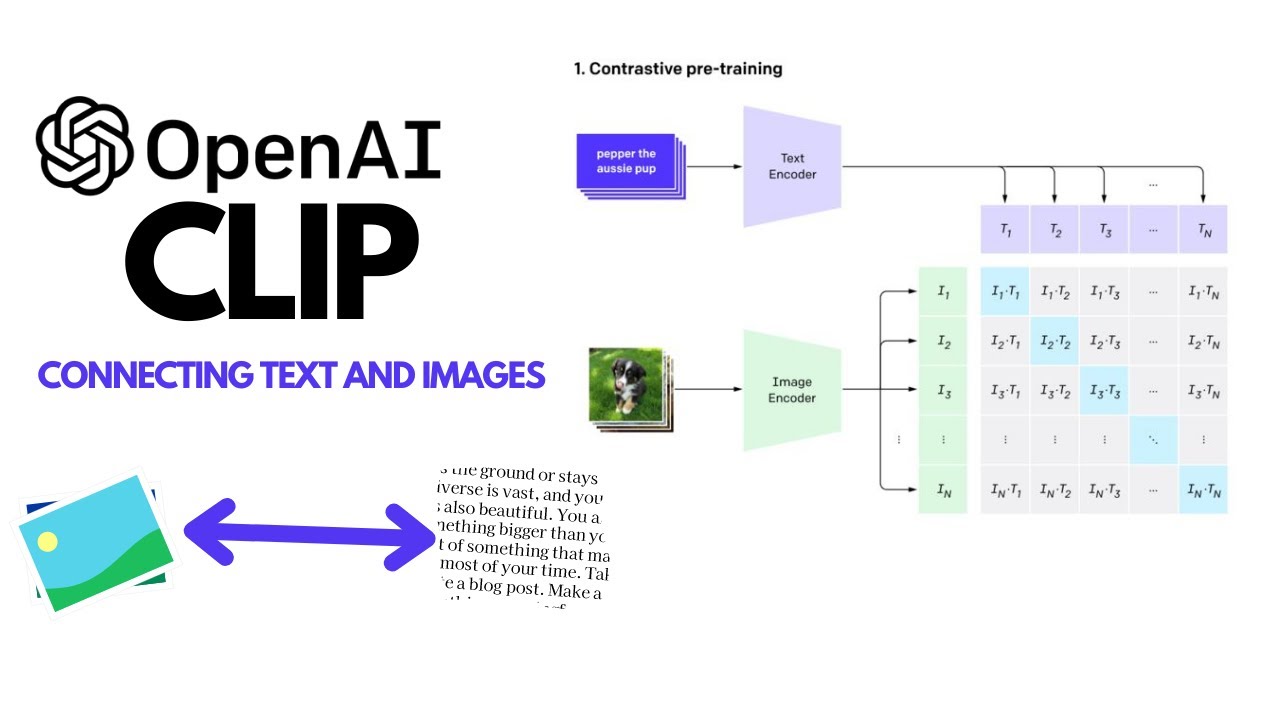

OpenAI's CLIP Explained and Implementation | Contrastive Learning | Self-Supervised Learning - YouTube

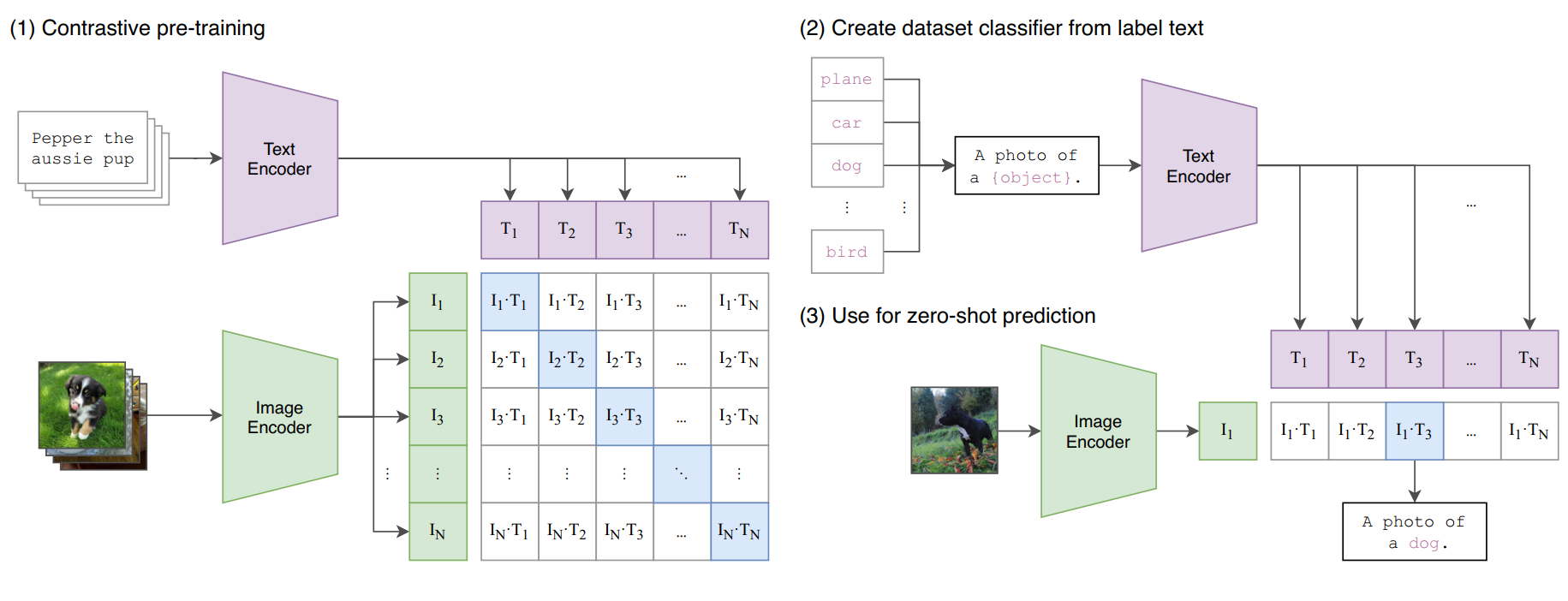

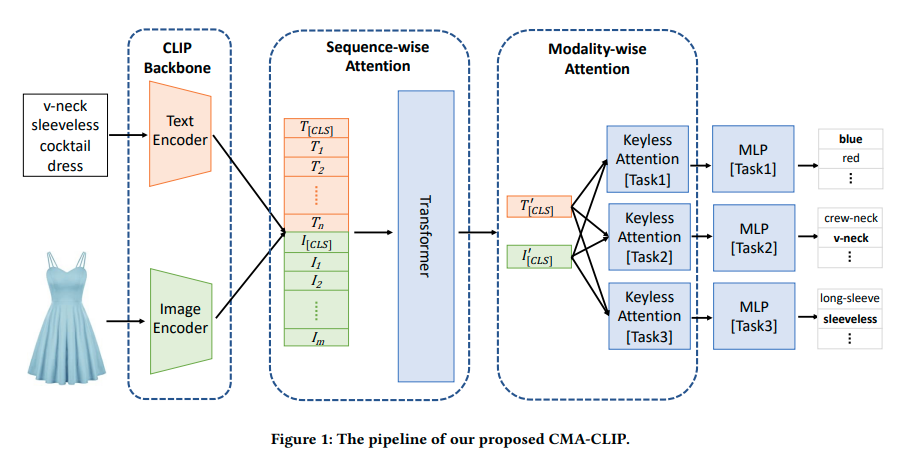

Process diagram of the CLIP model for our task. This figure is created...